The RadioStage app is a bespoke software solution for remote live radio theatre productions. General communication platforms like Zoom and Discord lack the granular audio control and minimal latency essential for seamless remote collaboration and performance in this artistic context.

I also wanted to explore real-time audio transmission technologies, particularly WebRTC, which is widely used in video conferencing but less so in specialised applications like this. The project also gave me a way to carry out qualitative user research and user-guided design.

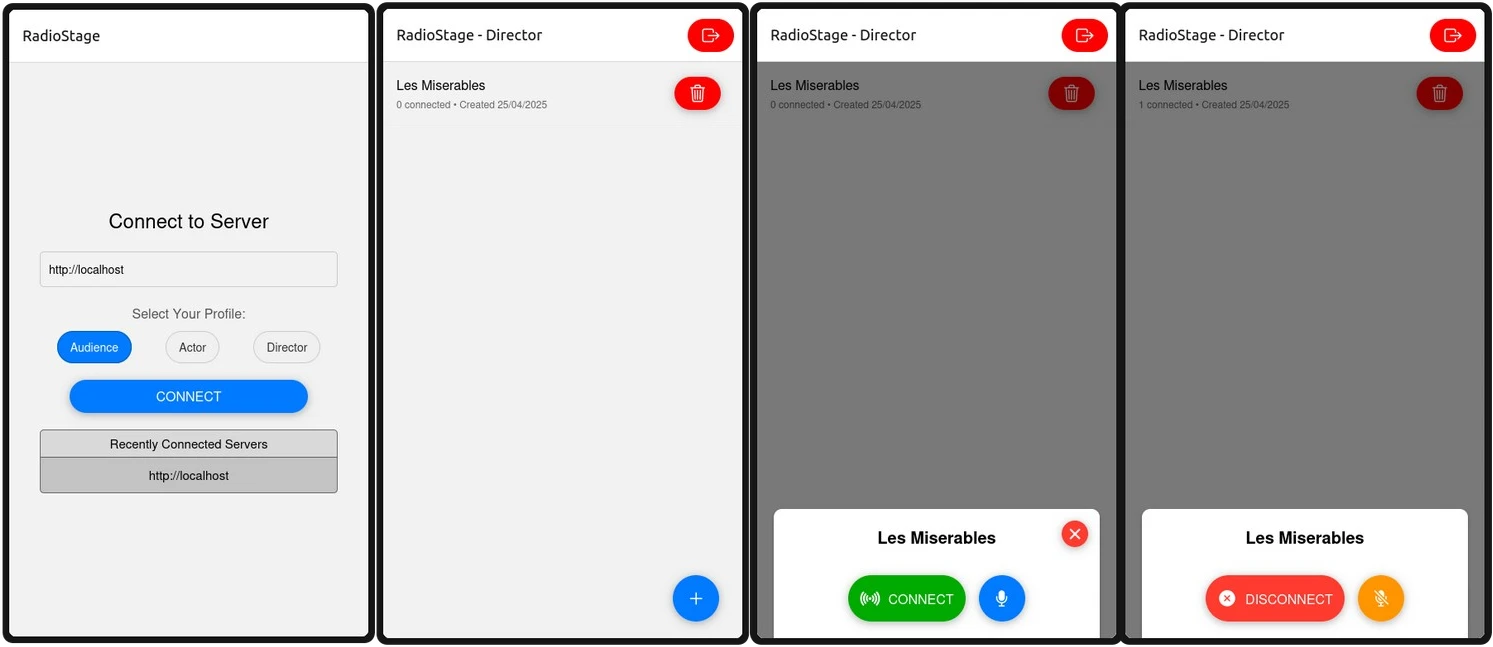

Core Functionality and Design

The application's design was tailored to the unique workflow of remote radio play direction and performance. Key functional objectives included role-based access for directors, actors and audience members, stage management to control which sounds are included in the final output, and a director talkback channel for private communication between the director and actors. I also wanted low latency, and set a target of under 500ms from mouth to ear, which I took as roughly the upper limit for real-time communication before lag becomes disruptive.
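To make the role and stage rules concrete, here is a minimal sketch in Go of how they could be expressed. It is not the actual RadioStage code: the `Role`, `Participant`, `InFinalOutput` and `TalkbackAllowed` names are hypothetical, and it assumes that only actors placed on stage feed the final output and that talkback always has the director on one end.

```go
package main

import "fmt"

// Role is a hypothetical enumeration of the three roles named above.
type Role int

const (
	Director Role = iota
	Actor
	Audience
)

// Participant is an illustrative record; the field names are assumptions.
type Participant struct {
	Name    string
	Role    Role
	OnStage bool // toggled by the director through stage management
}

// InFinalOutput reports whether a participant's audio is part of the
// mix that the audience hears. Assumption: only on-stage actors are.
func InFinalOutput(p Participant) bool {
	return p.Role == Actor && p.OnStage
}

// TalkbackAllowed reports whether speaker may reach listener on the
// private talkback channel. Audience members are always excluded, and
// one side of the conversation must be a director.
func TalkbackAllowed(speaker, listener Participant) bool {
	if speaker.Role == Audience || listener.Role == Audience {
		return false
	}
	return speaker.Role == Director || listener.Role == Director
}

func main() {
	director := Participant{Name: "director", Role: Director}
	lead := Participant{Name: "lead", Role: Actor, OnStage: true}
	understudy := Participant{Name: "understudy", Role: Actor}
	listener := Participant{Name: "listener", Role: Audience}

	for _, p := range []Participant{director, lead, understudy, listener} {
		fmt.Printf("%-10s in final output: %v\n", p.Name, InFinalOutput(p))
	}
	fmt.Println("director -> lead talkback:", TalkbackAllowed(director, lead))
	fmt.Println("director -> listener talkback:", TalkbackAllowed(director, listener))
}
```

Keeping these rules as small pure functions means the same checks could be reused wherever audio is routed, though where they would live in a real server is a design choice this sketch does not settle.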

A large focus was on the UI/UX design, which was informed by user research and feedback from potential users. The interface was designed to be intuitive and user-friendly, with clear visual cues for different roles and functionalities. For example, actors had a simple interface for managing their audio input and stage presence, while directors had more complex controls for managing the overall production.

Implementation

I built the frontend using React Native with Expo for cross-platform compatibility. The backend was developed in Go for high performance and efficient concurrency management. To carry audio between audience members, actors and directors, I used WebRTC for low-latency, peer-to-peer transmission, with a WebSocket connection for signalling and control. The server was deployed and hosted on a Google Compute Engine VM.
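As an illustration of the signalling side, the sketch below shows one way a Go server might decode and validate messages arriving over the WebSocket before relaying them to the right peer. The `SignalMessage` envelope, its field names and the `Route` function are assumptions made for this example, not the message format RadioStage actually uses, and the WebSocket transport itself is left out so the example needs only the standard library.

```go
package main

import (
	"encoding/json"
	"errors"
	"fmt"
)

// SignalMessage is a hypothetical envelope for signalling traffic.
type SignalMessage struct {
	Type    string          `json:"type"`    // "offer", "answer" or "candidate"
	From    string          `json:"from"`    // sender ID
	To      string          `json:"to"`      // recipient ID
	Payload json.RawMessage `json:"payload"` // SDP or ICE data, relayed untouched
}

// Route decodes a raw message and checks that it can be relayed.
// The server never inspects the payload; it only needs to know
// what kind of message it is and who should receive it.
func Route(raw []byte) (SignalMessage, error) {
	var msg SignalMessage
	if err := json.Unmarshal(raw, &msg); err != nil {
		return SignalMessage{}, fmt.Errorf("decode signal: %w", err)
	}
	switch msg.Type {
	case "offer", "answer", "candidate":
		if msg.To == "" {
			return SignalMessage{}, errors.New("signal has no recipient")
		}
		return msg, nil
	default:
		return SignalMessage{}, fmt.Errorf("unknown signal type %q", msg.Type)
	}
}

func main() {
	raw := []byte(`{"type":"offer","from":"director-1","to":"actor-2","payload":{"sdp":"v=0"}}`)
	msg, err := Route(raw)
	if err != nil {
		fmt.Println("rejected:", err)
		return
	}
	fmt.Printf("relay %s from %s to %s (%d payload bytes)\n",
		msg.Type, msg.From, msg.To, len(msg.Payload))
}
```

Using `json.RawMessage` for the payload keeps the server out of the SDP and ICE details, which fits a design where the audio itself travels peer to peer and the server only introduces the peers.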

Evaluation showed that the application met its low-latency requirement. Testing yielded average mouth-to-ear latencies of 219ms on a local network and 414ms when using a US-based VM, both within the 500ms target. Latency wasn't the main goal of the project, but I'm very happy with the result, since it makes the app feel usable in a realistic setting. With more time, I'd try to cut latency further by transmitting low-quality audio between actors and directors and high-quality audio only to the audience, then delaying the final mix by a few seconds and buffering it so that the output only the audience hears is paced correctly. This would allow productions with more complex sound design and tightly choreographed dialogue to be performed without audio dropouts or lag affecting the performance.
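The delayed, buffered audience mix is an idea rather than something I built, but the buffering part can be sketched as a simple playout buffer. Everything here is hypothetical: the `Frame` and `PlayoutBuffer` types, the use of sequence numbers, and the choice to play silence for a frame that never arrives. With 20ms frames, for instance, a three-second delay would correspond to 150 frames.

```go
package main

import "fmt"

// Frame is a hypothetical unit of mixed audio with a sequence number.
type Frame struct {
	Seq     int
	Samples []int16
}

// PlayoutBuffer releases frames in sequence order after a fixed delay,
// so late or reordered frames can still be played at the right moment.
type PlayoutBuffer struct {
	delayFrames int           // frame periods to wait before playout starts
	frameLen    int           // samples per frame, used to build silence
	ticks       int           // frame periods elapsed so far
	next        int           // sequence number of the next frame to play
	pending     map[int]Frame // frames received but not yet played
}

func NewPlayoutBuffer(delayFrames, frameLen int) *PlayoutBuffer {
	return &PlayoutBuffer{
		delayFrames: delayFrames,
		frameLen:    frameLen,
		pending:     make(map[int]Frame),
	}
}

// Push stores an incoming frame. Frames whose slot has already been
// played are dropped, since playing them would break the pacing.
func (b *PlayoutBuffer) Push(f Frame) {
	if f.Seq < b.next {
		return
	}
	b.pending[f.Seq] = f
}

// Tick is called once per frame period. It returns false while the
// buffer is still filling; afterwards it returns the next frame in
// order, or a silent frame if that frame has not arrived.
func (b *PlayoutBuffer) Tick() (Frame, bool) {
	b.ticks++
	if b.ticks <= b.delayFrames {
		return Frame{}, false
	}
	seq := b.next
	b.next++
	if f, ok := b.pending[seq]; ok {
		delete(b.pending, seq)
		return f, true
	}
	return Frame{Seq: seq, Samples: make([]int16, b.frameLen)}, true
}

func main() {
	buf := NewPlayoutBuffer(2, 4)
	arrivals := []int{0, 2, 1} // frame 3 never arrives
	for tick := 0; tick < 6; tick++ {
		if tick < len(arrivals) {
			seq := arrivals[tick]
			buf.Push(Frame{Seq: seq, Samples: []int16{int16(seq + 1), 0, 0, 0}})
		}
		if f, ok := buf.Tick(); ok {
			fmt.Printf("tick %d: play frame %d %v\n", tick, f.Seq, f.Samples)
		} else {
			fmt.Printf("tick %d: buffering\n", tick)
		}
	}
}
```

The trade-off is the usual one for such buffers: a longer delay absorbs more network jitter, which is acceptable here only because the audience does not talk back to the performers.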

Project Reflection

One of the most significant learning experiences was my initial use of WebSocket for audio transmission, which proved to be a really dumb idea: WebSocket runs over TCP and wasn't designed for real-time media (I can't think of a single protocol fit for that purpose…). This approach resulted in noticeable lag, averaging about 1.5 seconds, and silent gaps, rendering it unsatisfactory for real-time communication. After looking into the problem, I found WebRTC, which natively supports real-time communication. Switching to WebRTC significantly improved performance, reducing latency and eliminating the audio gaps.
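One likely contributor to lag of this kind is head-of-line blocking: WebSocket runs over TCP, which delivers data strictly in order, so a single lost packet holds back everything sent after it until the retransmission arrives. The toy model below illustrates the effect. The packet interval, network delay and retransmission time are made-up numbers chosen for illustration, not measurements from RadioStage, and the function names are hypothetical.

```go
package main

import "fmt"

// ArrivalTimes returns when each packet reaches the receiver, in ms.
// Packet i is sent at i*interval and takes oneWay ms to arrive; the
// lost packet is assumed to arrive retransmit ms later than that.
func ArrivalTimes(n, interval, oneWay, lost, retransmit int) []int {
	out := make([]int, n)
	for i := range out {
		out[i] = i*interval + oneWay
		if i == lost {
			out[i] += retransmit
		}
	}
	return out
}

// InOrderDelivery models a reliable ordered stream such as TCP: a
// packet reaches the application only once every earlier packet has.
func InOrderDelivery(arrivals []int) []int {
	out := make([]int, len(arrivals))
	latest := 0
	for i, t := range arrivals {
		if t > latest {
			latest = t
		}
		out[i] = latest
	}
	return out
}

func main() {
	const (
		n          = 6   // packets in the example
		interval   = 20  // ms between packets (assumed)
		oneWay     = 50  // ms network delay (assumed)
		lost       = 2   // index of the packet that is lost once
		retransmit = 300 // ms until the retransmission arrives (assumed)
	)
	delivered := InOrderDelivery(ArrivalTimes(n, interval, oneWay, lost, retransmit))
	for i, t := range delivered {
		onTime := i*interval + oneWay
		fmt.Printf("packet %d: delivered at %dms, %dms later than without the loss\n",
			i, t, t-onTime)
	}
}
```

In this model the packets sent after the lost one are delayed by 280ms, 260ms and 240ms even though they crossed the network on time. WebRTC audio normally travels over UDP without in-order delivery, so the same loss would cost one packet of audio rather than stalling the stream.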

Overall, RadioStage was a rewarding project that allowed me to explore real-time audio technologies and user-centred design in a specialised context. The application effectively addressed the needs of remote radio theatre productions, providing a tailored solution that general-purpose platforms could not offer.